Remediate Everything

Prioritization decides the order — never what you leave broken

TL;DR: The AppSec industry treats “prioritize and forget” as mature judgment when it is actually an artifact of manual remediation being expensive. This article argues three things: that the logarithmic ROI curve is a lie the industry tells itself to avoid industrializing remediation; that the real cost of a fix is not four hours of engineering but the risk-acceptance ceremony that legalizes not doing it, which is more expensive; and that the tail of mediums and lows is where attacker chains live, so cherry-picking criticals is structurally insufficient. The practical conclusion: stop deciding what to leave broken and start building the preconditions — unified backlog, precise detection, micro-changes, reversible deploys — that make remediating everything cheap.

A few weeks ago I was on a call with the CISO of a mid-sized SaaS company. Smart guy, ten years in the industry, decent budget. His team had just closed a quarterly cycle: 3,847 open vulnerabilities. Eleven criticals. Sixty-four highs. The rest — the vast, humid rest — were mediums and lows that had been accumulating for years like sediment at the bottom of a reservoir.

His question was the one I have heard hundreds of times in fifteen years of application security:

“Which ones should we fix?”

I want to dedicate this article to destroying that question. Not the CISO — the question itself. Because that question, which sounds so reasonable and grown-up, is the polite face of a practice that is structurally wrong and economically regressive. The honest answer is: all of them. Deliberately, as part of your normal engineering cadence, forever, like brushing your teeth. If your first reaction is that this is economically insane, we have already identified the disease.

The logarithmic lie

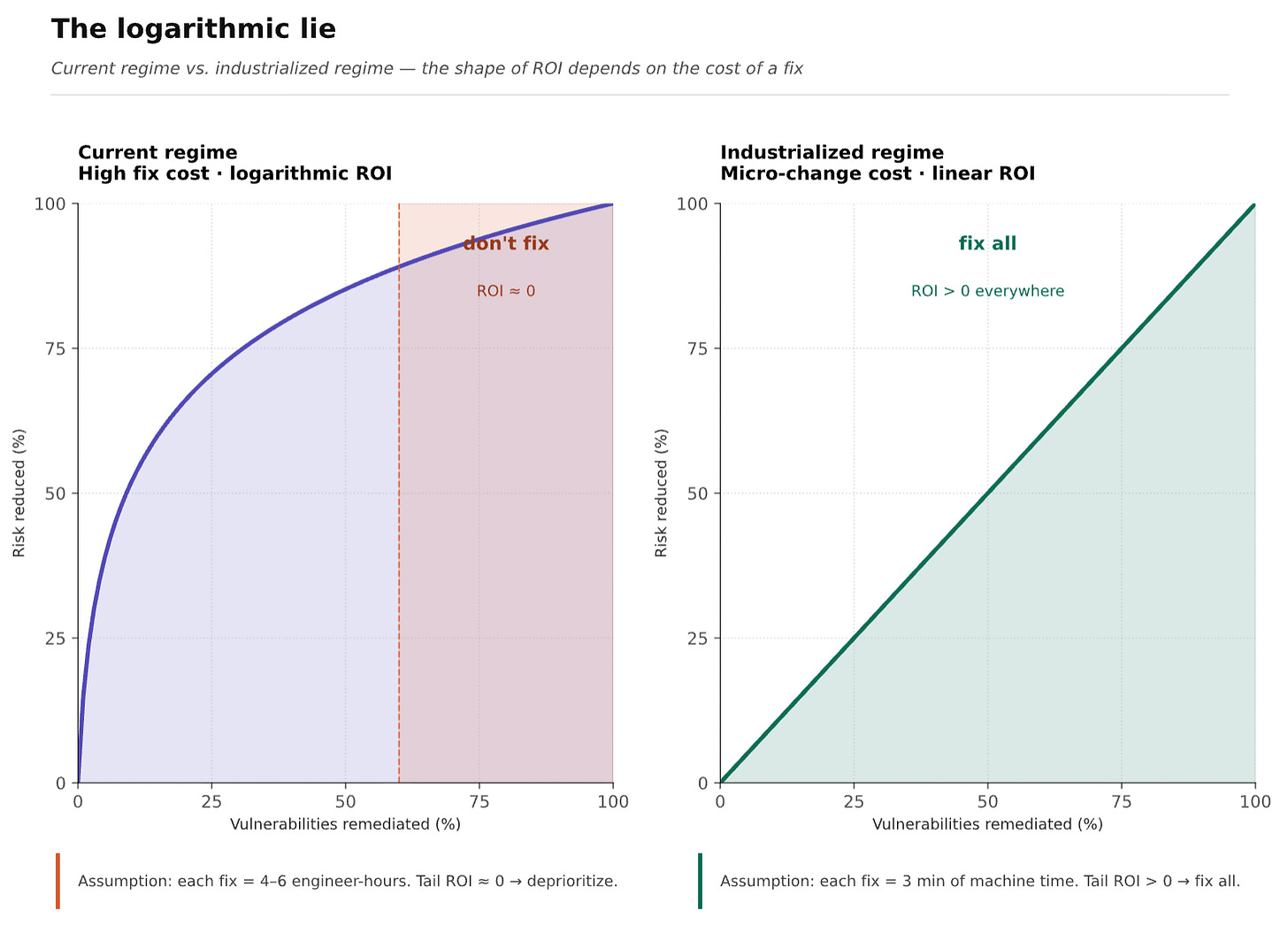

There is a graph in every vendor pitch deck. X axis: vulnerabilities remediated. Y axis: risk reduced. Curve logarithmic — steep at the beginning, flat at the tail. The takeaway is always the same: focus on the critical few, ignore the trivial many, that’s where the ROI lives.

I call this the logarithmic lie. Not because the math is wrong, but because the assumption that makes the math work is buried so deep almost nobody sees it.

The assumption is this: the cost of remediating each vulnerability is constant and high. That one assumption bends the curve. If remediation is expensive, yes, marginal fixes near the tail don’t pay for themselves. Prioritize, forget, go home.

But what if a fix is not a multi-week project? What if it is a micro-change — a dependency bump, a header added, an input sanitized — generated by an agent in ninety seconds, reviewed in thirty, merged behind a feature flag, deployed to one percent of traffic, reverted automatically if any signal degrades? The worst case is seconds of partial degradation for a small traffic slice, caught by the canary before anyone notices.

What does the logarithmic curve look like when each point costs three minutes of machine time and rollback is cheaper than the fix? It looks like a line. A slightly noisy line sloping gently upward, where every fix costs almost nothing. On that line, the ROI argument collapses. The reason you wouldn’t remediate finding number 3,000 stops being “diminishing returns” and becomes something simpler: you haven’t industrialized the work.
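To make the buried assumption visible, here is a minimal sketch. It assumes, purely for illustration, that the n-th fix is worth `value_of_first_fix / n` (which is what produces a roughly logarithmic cumulative curve). The function name, the value units, and the two cost figures are hypothetical, not measurements.

```python
def findings_worth_fixing(value_of_first_fix: float,
                          cost_per_fix: float,
                          total_findings: int) -> int:
    """Count findings whose marginal value still covers the per-fix cost.

    Illustrative assumption: the n-th fix is worth value_of_first_fix / n,
    so cumulative risk reduction grows roughly like log(n).
    """
    worth = 0
    for n in range(1, total_findings + 1):
        marginal_value = value_of_first_fix / n
        if marginal_value >= cost_per_fix:
            worth += 1
    return worth


TOTAL = 3_847  # backlog size from the opening anecdote

# Same risk curve, two different remediation cost structures (arbitrary units).
expensive = findings_worth_fixing(100_000.0, cost_per_fix=800.0, total_findings=TOTAL)
cheap = findings_worth_fixing(100_000.0, cost_per_fix=20.0, total_findings=TOTAL)

print(f"manual remediation: {expensive} findings pay for themselves")
print(f"industrialized remediation: {cheap} findings pay for themselves")
```

Under these made-up numbers the risk curve never changes; only the per-fix cost does. That alone moves the break-even point from the first 125 findings to the entire backlog.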

The Detroit problem, the aviation answer

Remediation is expensive because nobody remediates at scale, and nobody remediates at scale because remediation is expensive. A Nash equilibrium, and a particularly stupid one.

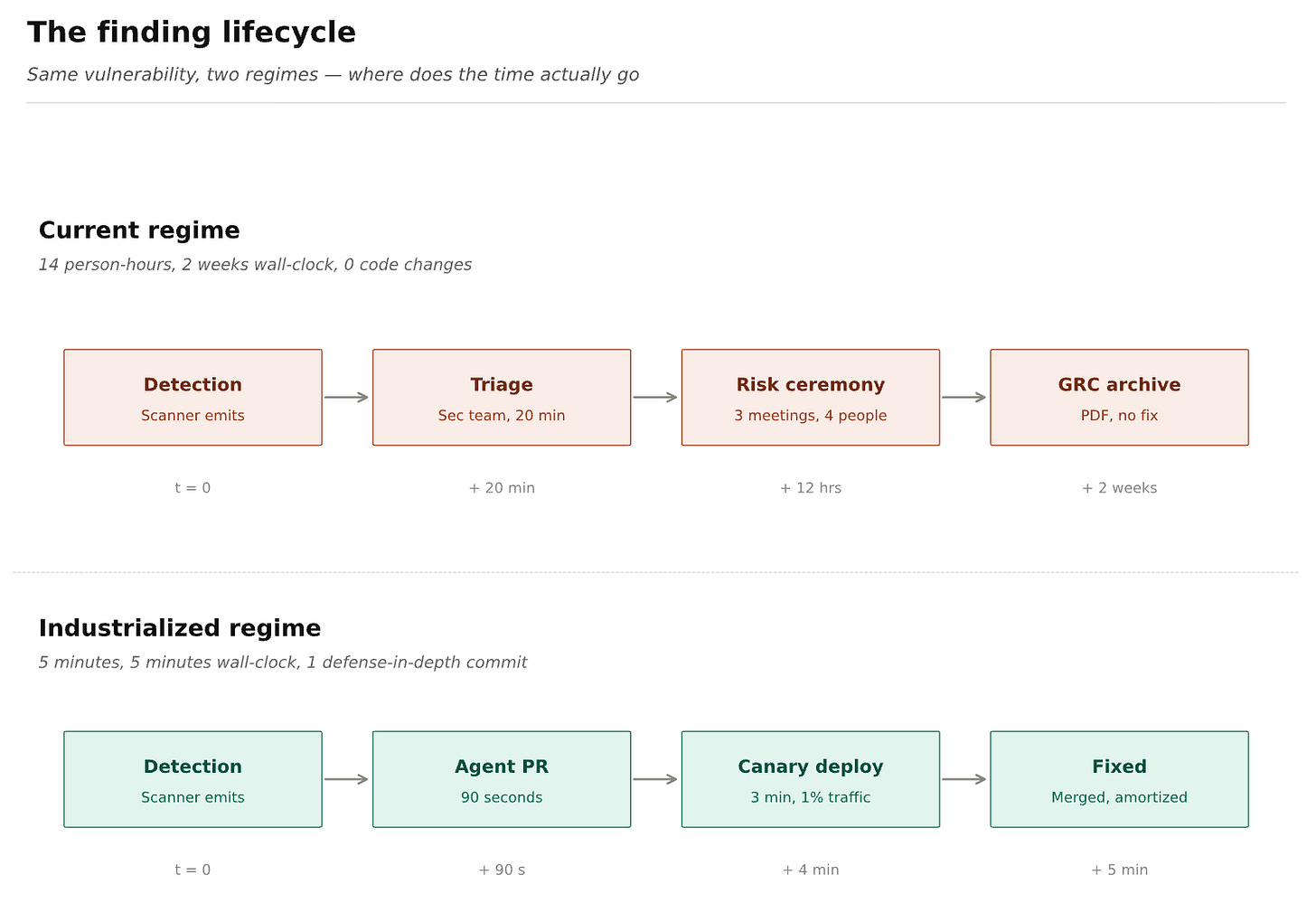

A senior engineer in Colombia at $15M COP/month costs closer to $23M once you count benefits and fiscal carry. When a finding from SAST, AI-SAST, SCA, Secret Scanning, or DAST lands in this organization, it sits in a queue because nobody owns it. When someone looks, they triage five findings to fix one real bug because false positive rates run 15-20%. The PR waits for review. CI takes 45 minutes. The deploy hides behind a forgotten feature flag. Four to six hours of engineer time per fix, across three people, two weeks of wall-clock. Call it $800K COP. Multiply by 3,000 mediums: $2,400M COP that no CFO will sign.

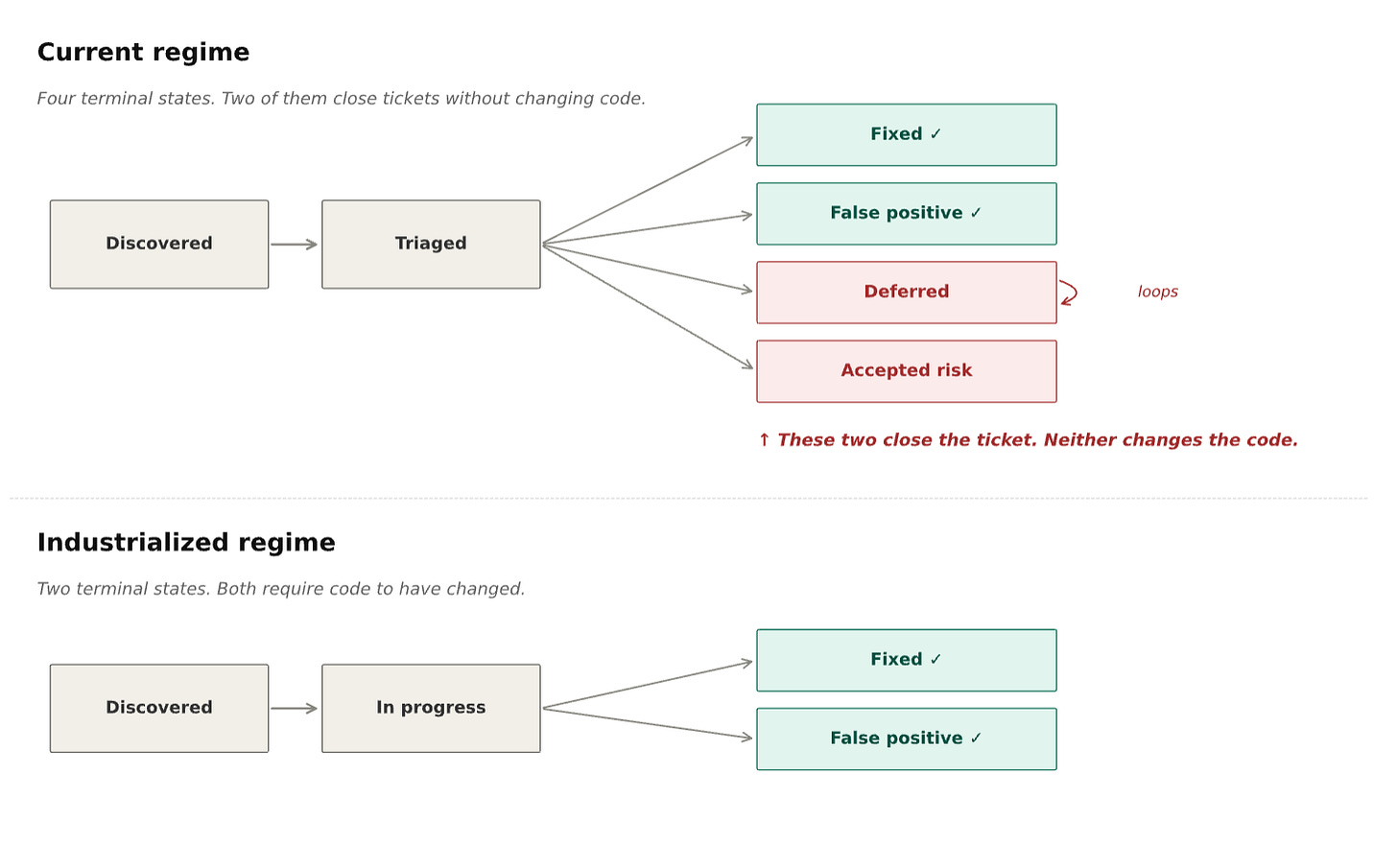

Except that is not the real comparison. The real comparison is four hours of engineer time versus the cost of deciding not to do it. That second column never appears in the board deck. It looks like this: three meetings of one hour with four people each — score the finding, debate exploitability, document compensating controls, sign off a formal risk acceptance. Two of the four are VPs whose fully-loaded cost is five times the programmer’s. Twelve person-hours to produce a PDF in a GRC tool saying we have legally decided not to fix this thing. No defense added. No attack surface reduced. Meanwhile the programmer who could have added a defense-in-depth input validation in thirty minutes was not invited. That would have been unprofessional.
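The two columns can be put side by side with a few lines of arithmetic. This is a rough sketch using the figures in the text, plus two assumptions of mine: a 160-hour working month, and non-VP attendees costing the same per hour as the senior engineer.

```python
FULLY_LOADED_MONTHLY_COP = 23_000_000  # senior engineer, figure from the text
HOURS_PER_MONTH = 160                  # assumption: roughly 40 hours a week
VP_COST_MULTIPLIER = 5                 # from the text

HOURLY_COP = FULLY_LOADED_MONTHLY_COP / HOURS_PER_MONTH


def fix_cost(engineer_hours: float) -> float:
    """Cost of actually fixing the finding by hand."""
    return engineer_hours * HOURLY_COP


def ceremony_cost(meetings: int = 3, hours_each: float = 1.0,
                  vps: int = 2, others: int = 2) -> float:
    """Cost of the risk-acceptance meetings that replace the fix.

    Assumption: the non-VP attendees cost the same per hour as the engineer.
    """
    room_cost_per_hour = (others + vps * VP_COST_MULTIPLIER) * HOURLY_COP
    return meetings * hours_each * room_cost_per_hour


low, high = fix_cost(4), fix_cost(6)
ceremony = ceremony_cost()

print(f"fix:      {low:,.0f} to {high:,.0f} COP")
print(f"ceremony: {ceremony:,.0f} COP")
print(f"ceremony is {ceremony / high:.0f}x to {ceremony / low:.0f}x the cost of the fix")
```

With these inputs the manual fix lands between 575,000 and 862,500 COP, which brackets the $800K figure above, while the ceremony comes to 5,175,000 COP: six to nine times the cost of the fix it replaces.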

I cannot say this politely. The AppSec industry has built an enormous, well-compensated apparatus whose function is to legalize not fixing vulnerabilities, and it is more expensive than fixing them. The ceremony of risk acceptance is the modern rework bay: a room full of salaried people managing the consequences of not doing the thing that, industrialized, would cost almost nothing.

Detroit made this mistake in the 1970s; Toyota proved them wrong over thirty years. But the model I want you to sit with is aviation. Commercial aircraft operate under continuous, legally-mandated long-tail maintenance — A-checks every 500 flight hours, D-checks every 6-10 years where they disassemble the plane. When any operator anywhere reports a defect, airworthiness directives force every aircraft of that model to inspect it. There is no triage meeting. You check it, fix it, log it, fly. The reason this works — the reason Bogotá-Madrid costs USD 800 on an aircraft carrying hundreds of millions of dollars of cumulative maintenance — is that aviation spent seventy years engineering the preconditions that make each event cheap: modular components, standardized tooling, exhaustive documentation, trained mechanics at every airport. Enormous cost paid once, amortized across every flight on earth.

AppSec has not paid that cost. And so we live in a world where “medium severity, low exploitability, deprioritize” passes for professional judgment, when the aviation equivalent — “slight hairline crack, low probability of structural failure, let’s skip it” — would end a career.

The backlog is one backlog

One point sabotages most organizations before they start. Security work does not live in a separate backlog. The moment you create “the security backlog” distinct from “the engineering backlog,” you have lost. Work parked there gets “when we have time” priority, which means never.

Remediation has to be feature work for the team that owns the code. Same backlog, same review standards, same Definition of Done. A dependency bump for a transitive CVE is a ticket in the same Jira project as the new checkout flow. The security team does not hand off work — it supplies validated, deduplicated findings into a system engineering already operates. Aviation does not have a separate “safety backlog” negotiated against flight operations. Safety is maintenance. Maintenance is operations. One system, one queue.
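What "deduplicated findings" might mean in practice can be sketched in a few lines. The `Finding` shape and the choice of `(cwe, path, line)` as the merge key are hypothetical. Real scanners report locations and rule identifiers differently, so treat this as an illustration of the idea rather than a working integration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    tool: str   # which scanner reported it, e.g. "SAST" or "DAST"
    cwe: str    # weakness class
    path: str   # file the finding points at
    line: int


def deduplicate(findings):
    """Merge findings that describe the same weakness at the same location.

    Returns a dict mapping (cwe, path, line) to the set of tools that
    reported it, so each key can become exactly one ticket.
    """
    merged = {}
    for f in findings:
        key = (f.cwe, f.path, f.line)
        merged.setdefault(key, set()).add(f.tool)
    return merged


raw = [
    Finding("SAST", "CWE-89", "app/orders.py", 42),
    Finding("AI-SAST", "CWE-89", "app/orders.py", 42),
    Finding("DAST", "CWE-79", "app/views.py", 10),
]
tickets = deduplicate(raw)

for (cwe, path, line), tools in tickets.items():
    print(f"{cwe} at {path}:{line} reported by {sorted(tools)}")
```

Three raw findings become two tickets, and each ticket records which tools agreed. Those tickets then go into the team's ordinary backlog, not a separate one.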

The preconditions we refuse to build

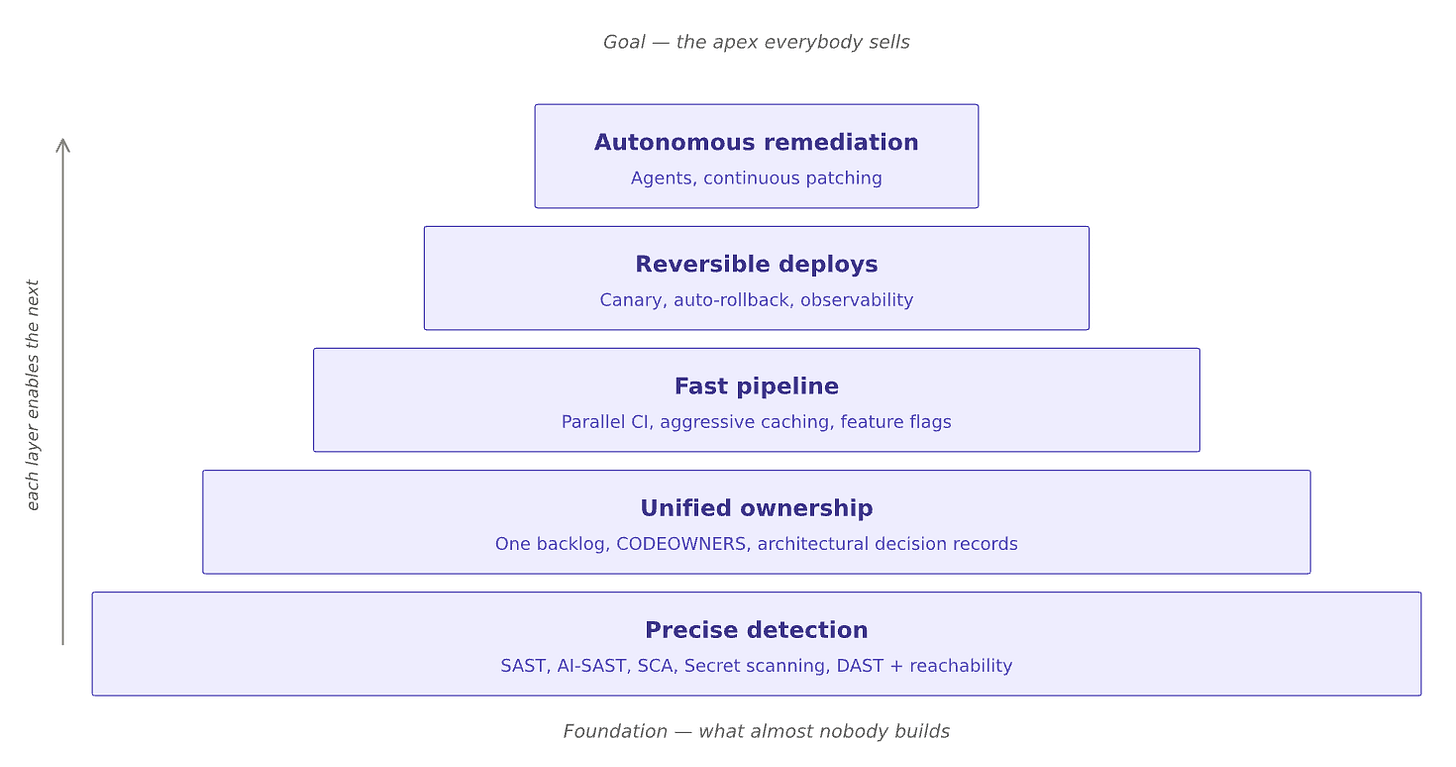

Every time I argue for remediate-everything, someone raises a valid objection. These objections are real, and every one describes a precondition the industry has chosen not to build:

“Our scanners are too noisy.” Fine. Industrialize scanner accuracy. Combine SAST, AI-SAST, SCA, Secret Scanning, and DAST with reachability analysis. Validate with humans. Drop false positives below 5%. This is not science fiction — some vendors already do it today.

“We’d destroy our CI throughput.” Fine. Industrialize the pipeline. Parallel builds. Aggressive caching. Feature flags. Canary deploys. These are 2015 technologies, not 2035 speculation.

“We don’t know who owns what code.” Fine. Industrialize ownership. CODEOWNERS files are free. Architectural decision records are free. “We don’t know who owns this” is a choice, not a condition.

“Fixing things breaks things.” Fine. Industrialize your test coverage and your rollback. If your fix is a micro-change behind a feature flag with a canary that auto-reverts on error-rate regression, the blast radius of a bad fix is seconds of partial degradation for a small traffic slice. That is not the same category of risk as a two-hour outage. Treating them as equivalent is how you justify the status quo.
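The auto-revert in that last objection is not exotic. Here is a minimal sketch of the decision a canary controller makes, assuming it can read request and error counts for the baseline and the canary slice. The `Window` type, the thresholds, and the three verdict strings are all hypothetical, and a production controller would watch more signals than the error rate.

```python
from dataclasses import dataclass


@dataclass
class Window:
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_verdict(baseline: Window, canary: Window,
                   min_requests: int = 500,
                   tolerance: float = 0.005) -> str:
    """Decide what to do with a canary deploy.

    Returns "wait" until the canary has seen enough traffic, "rollback" if
    its error rate exceeds the baseline by more than `tolerance`, and
    "promote" otherwise. Both thresholds are illustrative defaults.
    """
    if canary.requests < min_requests:
        return "wait"
    if canary.error_rate > baseline.error_rate + tolerance:
        return "rollback"
    return "promote"


baseline = Window(requests=100_000, errors=200)  # 0.2% error rate

print(canary_verdict(baseline, Window(requests=100, errors=50)))
print(canary_verdict(baseline, Window(requests=1_000, errors=30)))
print(canary_verdict(baseline, Window(requests=1_000, errors=3)))
```

The three calls return "wait", "rollback", and "promote" respectively. The point is that a bad fix is caught by a comparison over a small slice of traffic, not by an incident review.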

Every one is an argument for building the preconditions, not for refusing to remediate. A hospital that said “we can’t afford to sterilize all the instruments” would be closed within a week. We accept the equivalent argument in AppSec because we grew up inside it.

The honest name for this refusal is laziness — organizational laziness. It is more comfortable to have the same “we have to prioritize” meeting every quarter than to do the multi-year work of building the system that makes the meeting obsolete. The meeting produces slides. The system produces nothing visible for two years, then suddenly produces a company that fixes any vulnerability in hours and ships through it without blinking. Guess which one gets funded.

The tail is where the attackers live

The logarithmic curve measures individually attributable risk. At the tail, the honest answer is “very little” — in isolation. Real attacks are never in isolation. Real attacks are chains.

SolarWinds was a) a compromised build pipeline, plus b) a legitimate signing certificate, plus c) a dormant backdoor with a 12-day incubation, plus d) weak segmentation across 18,000 downstream organizations, plus e) EDR evasion. Equifax was a) an unpatched Struts CVE with a fix already available, plus b) an expired TLS certificate that silenced monitoring for nineteen months, plus c) a flat network exposing 48 databases. Target was a) a stolen HVAC contractor credential, plus b) an exposed vendor portal, plus c) lateral movement, plus d) POS malware, plus e) an unmonitored FTP exfiltration path.

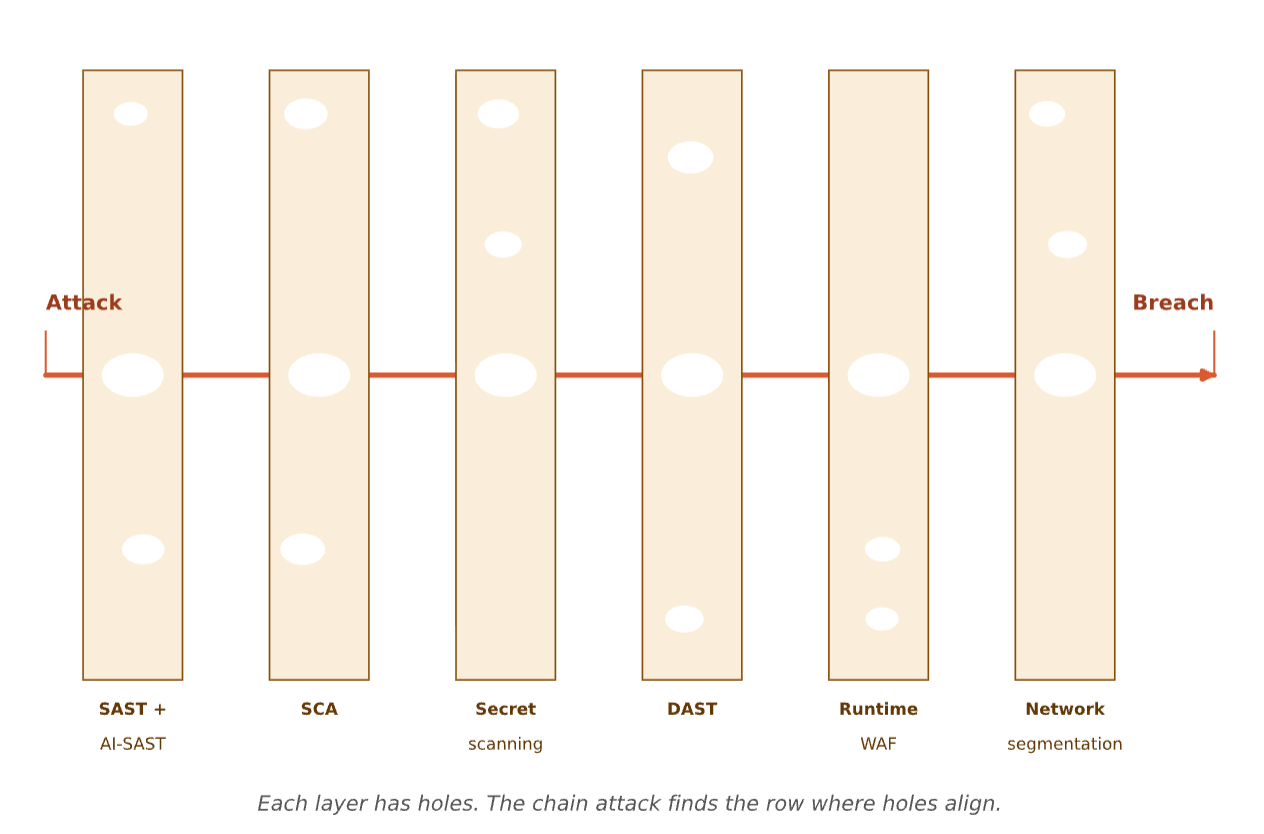

None were caused by a vulnerability labeled “critical” in isolation. Every one was an alignment of mediums and lows that, individually, any CISO under the logarithmic doctrine would have deprioritized — and, quite plausibly, did. James Reason called this the Swiss cheese model: layers of defense each have holes, accidents happen when the holes line up. You cannot predict which will align. The only robust defense is reducing the total count of holes across all layers — a population strategy, fundamentally incompatible with cherry-picking criticals.
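A toy model shows why the count of holes matters more than the severity of any single one. It assumes, unrealistically, that an attack chain needs exactly one hole per layer and that any hole in one layer can combine with any hole in the next. The layer sizes are invented for illustration.

```python
from math import prod


def candidate_chains(holes_per_layer):
    """Number of ways to line up one hole in every defensive layer.

    Toy assumption: holes combine freely across layers, so the count of
    possible chains is the product of the per-layer hole counts.
    """
    return prod(holes_per_layer)


# Hypothetical open findings in three layers (e.g. perimeter, application, network).
today = [40, 25, 30]

# Cherry-pick: close the 5 "criticals", all of which sit in the first layer.
criticals_only = [35, 25, 30]

# Population strategy: close roughly half of the holes in every layer.
everywhere = [20, 12, 15]

for label, layers in [("today", today),
                      ("criticals only", criticals_only),
                      ("fewer holes everywhere", everywhere)]:
    print(f"{label}: {candidate_chains(layers)} candidate chains")
```

In this model, closing the criticals trims the candidate chains from 30,000 to 26,250, while thinning every layer cuts them to 3,600. The two strategies involve very different amounts of work, which is exactly why the cost per fix decides which one is affordable.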

Other high-stakes industries are decades ahead on one more thing. In aviation, when something almost goes wrong, pilots report to the Aviation Safety Reporting System, run by NASA at arm’s length from the FAA so career consequences don’t chill reporting. Anonymized, aggregated, published. A near miss in Seattle becomes a training bulletin in Singapore next month. Banks share fraud signals the same way: a scam hitting one bank at 2 AM reaches the others by end of business.

Security does none of this. An incident has to be regulated into disclosure — SEC 4-day rules, GDPR notifications — and what gets shared is the sanitized post-mortem of an actual breach, months late, written by lawyers minimizing liability. The near miss — the attempted intrusion that failed, the misconfiguration caught before exploitation — sits in an internal Jira labeled “not exploited, closed,” and that is the end of its epistemological life. Every company is its own first victim of every attack pattern.

If you cannot learn from other organizations’ near misses, you have to assume any finding in your environment, however mundane, could be the first link in a chain someone else has already seen but cannot tell you about. Under those conditions, remediating everything is not paranoia — it is the only epistemically defensible strategy.

The detection paradox, the factory ahead

An uncomfortable observation about the industry I make my living in. The last five years have been a gold rush of detection — AI-SAST with semantic context, reachability analysis, SCA tracing transitive dependencies, secret scanners correlating git history, DAST exercising real auth flows, exploitability engines chaining findings. Real progress. The most significant advance in security tooling since the invention of the scanner.

And it has produced almost no improvement in the rate at which vulnerabilities actually get fixed.

Here is the paradox: if detection is precise and exploitability analysis is accurate, the two necessary inputs to an autonomous patcher are already in place. A precisely located flaw, with a precisely understood attack path, in a precisely bounded region of code is a fully-specified input for a remediation agent. The industry has invested heavily in knowing exactly what is wrong, where, and how it would be exploited, and yet it has not closed the loop to “therefore, here is the patch.” That is not a technical limitation. It is a failure of imagination.

Every piece exists in fragments. Precise detection. Exploitability analysis. Autonomous coding agents. Elastic CI. Feature flags. Canary deploys. Auto-rollback. Unified backlogs. The pieces are on the shelf. Assembling them is hard engineering work, but it is not research — it is assembly. Someone will assemble it. The question is whether that happens inside the current AppSec industry or to it.

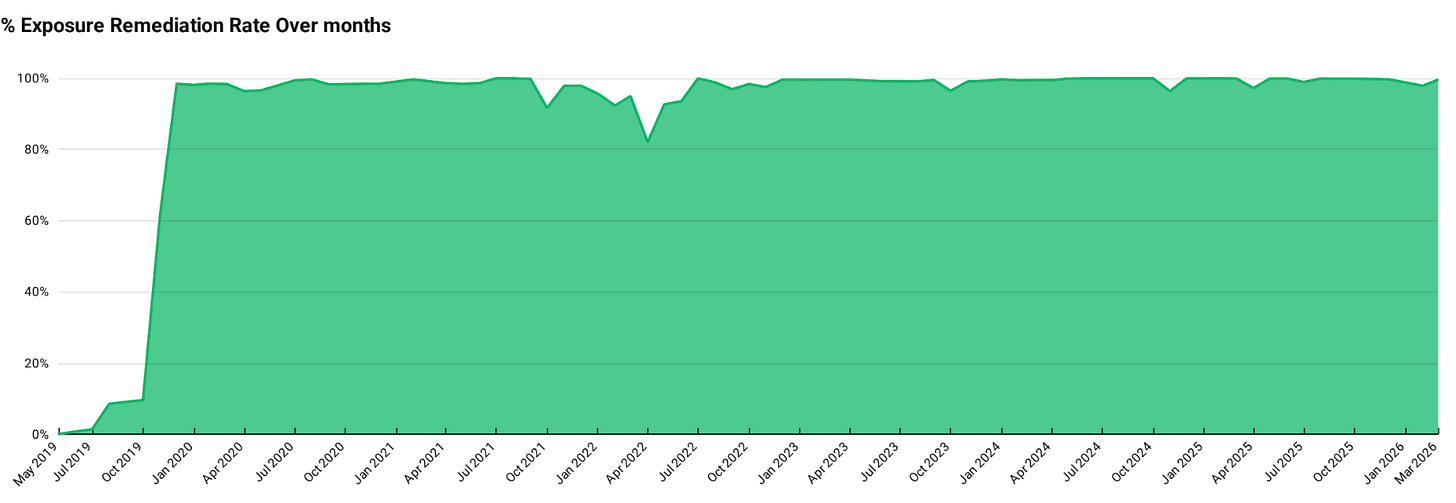

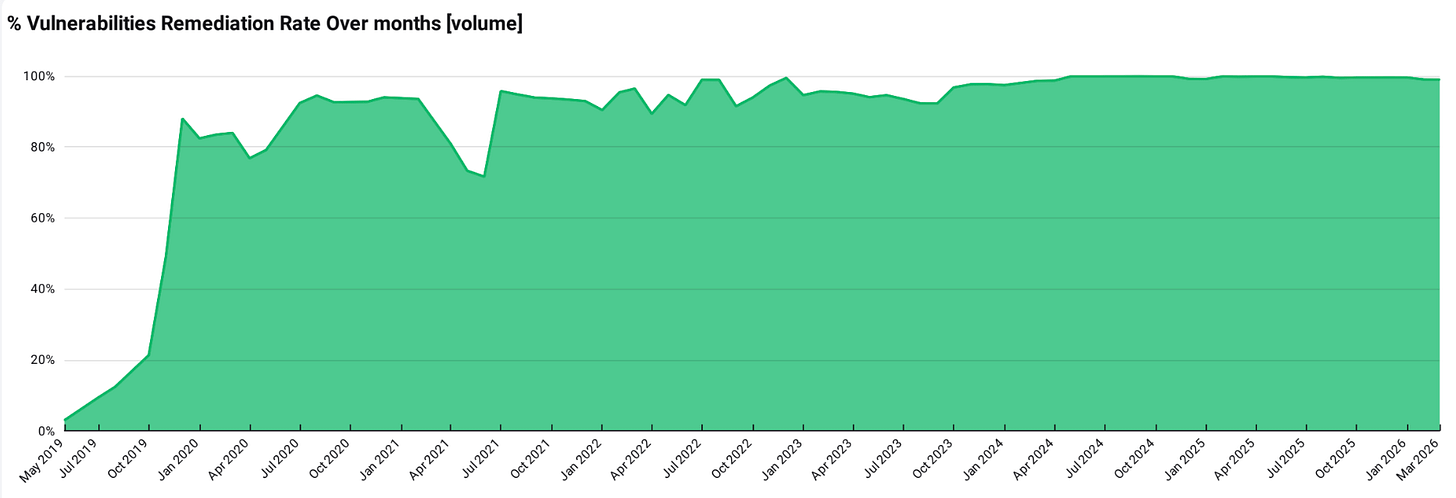

If you are running security today, the question on your desk is not “which of my 3,847 findings matter most.” It is: how do I get my organization to the point where that question is no longer interesting? How do I unify the backlog, shrink the fix into a micro-change, build the rollback safety net, get detection precise enough and the pipeline fast enough that the answer to every finding is simply “yes, and it’s already in progress”?

The answer to “which ones should we fix” is all of them. What you should be asking is “what stops me from fixing all of them?” Then kill those obstacles one by one, with the seriousness you would bring to a production outage. Every obstacle is a decision to run a workshop instead of a factory. To stay in 1975. To keep having the meeting.

Toyota took thirty years. Aviation took seventy. AppSec will probably take a decade, and most organizations will not make it. The ones that move first will build structural advantage nobody catches up to.

Prioritize the order. Remediate everything. Build the factory.